Inceptions, as a service.

For your agents.

Plant a fact. Watch the labyrinth grow. Recall the path. Mazemaker is the inception engine for AI memory: a self-building knowledge graph, spreading activation, dream consolidation, conflict supersession. Not a vector wrapper. A memory system that remembers how the pieces fit together.

In one minute… a lot can happen.

Even the worst, fatal nightmares — an entire conversation lost to a context-window reset. Every fix, every preference, every name, every path you mentioned three weeks ago: gone in the time it takes you to refill a coffee.

Mazemaker fixes that. Plug it in, and your agent gets a brain that…

- Remembers what you tell it. Preferences, decisions, fixes, the path of that file you mentioned three weeks ago.

- Connects related ideas. Like a real notebook with cross-references, not a search box.

- Reflects while you sleep. Overnight, it strengthens what matters and notices new connections.

- Updates itself when you change your mind. Old facts get superseded, not duplicated.

That’s it — the rest of this page goes deeper the further you scroll.

Don’t want to install anything? A managed hosted endpoint runs at v2.mazemaker.dev: sign up, point your agent at the MCP endpoint, done.

Not ChatGPT Memory. A Brain Layer.

Vector search retrieves nearby text. Mazemaker follows relationships, supersedes stale facts, consolidates overnight, and can explain why a memory surfaced. The difference is not a percentage. It is a phase change — questions vector databases cannot answer by construction become routine.

Vector databases treat memory as a flat space of disconnected documents. Mazemaker builds a labyrinth. Every memory is a node. Every relationship is a weighted edge auto-discovered at insert time. Your agent does not search the cloud; it walks the labyrinth. Spreading activation propagates outward from a starting node with attenuation, much as spreading-activation models describe human associative recall. Hop-2 reasoning, the kind of question a cosine search cannot answer by construction, goes from R@10 0.00 to 1.00. Not a thirty-percent improvement. A phase change.

The biological-sleep-inspired dream engine runs three phases overnight. NREM replays recent memories and strengthens the edges that fired together. REM bridges isolated nodes that never met but probably should. Insight detects communities and crystallises summary memories from clusters. On facts unreachable from any single memory, post-dream synthesis lifts R@10 from a structural 0.00 to 0.43. Memory gets denser, not noisier, every night. No competing product runs autonomous consolidation.

Every recall returns the activation trace — the path the search walked, the edge weights it followed, the confidence at each hop. Your agent can debug its own retrieval. You can see why a memory surfaced instead of trusting a black box. The graph is queryable, not just searchable. No other memory product offers this surface; the rest stop at "here are the top-k documents".

We submitted the entire benchmark suite — including the negative controls that must fail when the relevant mechanism is removed — to GPT-5.5 via the codex CLI. Eight rounds. The first two rejected the suite outright. By round eight, every concrete objection was closed by code change, not by argument. Round eight verdict: unconditional yes — no residual caveat. Every prompt and every verdict is committed verbatim in the repository. This is not how you build a wrapper. This is how you build a category.

The Numbers That Matter

Every row comes from the v8 audit benchmark summary, with controls that must fail when the mechanism is removed.

Answer reachable only through A -> B -> C edges. Vanilla cosine cannot solve it by construction.

Collapse proves traversal is load-bearing, not the embedding model accidentally helping.

Facts inferable only after consolidation become reachable after dream cycles.

Newer contradictory facts supersede stale ones instead of duplicating noise.

Concept-mode distractors pile up; the graph still holds continuity.

Real prose n=200: lean beats skynet by +0.18 R@5 and drops dead-weight channels.

Eight Audit Rounds. No Residual Caveat.

Lexical leakage; broken dream suite; no baseline.

Topic-word leakage; anchor collisions; wrong import.

Source fixes pending verification.

Conditions satisfied with four caveats.

Real-text mode and lean preset shipped.

Lean beat skynet; one dream sample-size caveat remained.

Dream lift +0.43 at scale. No residual caveat.

The Engine Inside

One core, three integration shapes: Hermes plugin, MCP server, standalone library.

Semantic storage

FastEmbed ONNX with intfloat/multilingual-e5-large writes 1024d vectors, then links nearby memories into weighted graph edges.

remember -> embed -> SQLite INSERT -> cosine kNN -> connections
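The store path above can be sketched in a few lines. This is a minimal illustration, not Mazemaker's actual schema or API: the table names, the `remember` function, the `toy_embed` stand-in (a hashed bag-of-words instead of FastEmbed), and the `k` / `min_sim` parameters are all assumptions made for the example.

```python
import hashlib
import math
import sqlite3

DIM = 16  # toy dimension; the page cites 1024d vectors from FastEmbed


def toy_embed(text):
    """Hypothetical stand-in for a real embedder: hashed bag-of-words, unit length."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def cosine(a, b):
    # Both inputs are already unit length, so the dot product is the cosine.
    return sum(x * y for x, y in zip(a, b))


def remember(db, text, k=3, min_sim=0.15):
    """Embed, INSERT, then link the new memory to its k nearest existing memories."""
    vec = toy_embed(text)
    existing = db.execute("SELECT id, vec FROM memories").fetchall()
    cur = db.execute(
        "INSERT INTO memories (text, vec) VALUES (?, ?)",
        (text, ",".join(map(str, vec))),
    )
    new_id = cur.lastrowid
    scored = [(cosine(vec, [float(x) for x in v.split(",")]), mid) for mid, v in existing]
    for sim, mid in sorted(scored, reverse=True)[:k]:
        if sim >= min_sim:
            db.execute(
                "INSERT INTO connections (src, dst, weight) VALUES (?, ?, ?)",
                (new_id, mid, sim),
            )
    db.commit()
    return new_id


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE memories (id INTEGER PRIMARY KEY, text TEXT, vec TEXT)")
db.execute("CREATE TABLE connections (src INTEGER, dst INTEGER, weight REAL)")
a = remember(db, "deploy script lives in tools/deploy.sh")
b = remember(db, "the deploy script needs sudo")
edges = db.execute("SELECT src, dst FROM connections").fetchall()
```

The second memory shares two tokens with the first, so the kNN step writes a weighted edge between them at insert time, with no separate indexing pass.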

Recall pipeline

CUDA matmul when available, numpy fallback otherwise. Multi-channel retrieval fuses semantic, BM25, entity, temporal, PPR, and salience.

query -> FastEmbed -> GPU/CPU cosine -> RRF fusion -> ranked memory
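Reciprocal Rank Fusion itself is a small, well-known formula: each channel contributes 1 / (k + rank) for every item it ranks. The sketch below assumes the conventional constant k = 60 and three example channels; whether Mazemaker uses that constant, and the `rrf_fuse` name, are assumptions.

```python
from collections import defaultdict


def rrf_fuse(rankings, k=60):
    """Reciprocal Rank Fusion: score(m) = sum over channels of 1 / (k + rank of m)."""
    scores = defaultdict(float)
    for ranked_ids in rankings.values():
        for rank, mem_id in enumerate(ranked_ids, start=1):
            scores[mem_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda m: (-scores[m], m))


channels = {
    "semantic": ["m1", "m2", "m3"],
    "entity": ["m3", "m1"],
    "ppr": ["m3", "m2", "m1"],
}
fused = rrf_fuse(channels)
```

Here `m3` wins because two channels rank it first, even though the semantic channel ranks it last. Only ranks are combined, so channels with incomparable raw score scales can be fused without normalisation.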

Spreading activation

mazemaker_think uses BFS or Personalized PageRank. PPR is the ranking-quality channel; removing it costs 0.13 MRR.

source memory -> graph walk -> decay -> activation trace
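A BFS variant of that walk might look like the following. It is a sketch under stated assumptions: the `spread` function, the `decay`, `max_hops`, and `min_activation` parameters, and the dictionary graph format are illustrative, not the signature of mazemaker_think.

```python
from collections import deque


def spread(graph, source, decay=0.5, max_hops=2, min_activation=0.05):
    """BFS spreading activation; returns activations plus the path taken to each node."""
    activation = {source: 1.0}
    trace = {source: [source]}
    queue = deque([(source, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue
        for neighbour, weight in graph.get(node, {}).items():
            a = activation[node] * weight * decay  # attenuate on every hop
            if a >= min_activation and a > activation.get(neighbour, 0.0):
                activation[neighbour] = a
                trace[neighbour] = trace[node] + [neighbour]
                queue.append((neighbour, hops + 1))
    return activation, trace


graph = {
    "A": {"B": 0.9},
    "B": {"A": 0.9, "C": 0.8},
    "C": {"B": 0.8},
}
activation, trace = spread(graph, "A")
```

`C` has no direct edge to `A`, yet it is reached through `B`, and `trace["C"]` records the path `A`, `B`, `C` that explains why it surfaced.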

Conflict supersession

Contradictory updates fuse or mark stale memories, preserving revision history instead of poisoning recall with duplicates.

new fact -> entity + semantic overlap -> supersede -> history
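One way to picture the supersession step is shown below. Token-level Jaccard overlap stands in for real semantic similarity, and the `Memory` dataclass, `store` function, and `threshold` value are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    text: str
    entities: frozenset
    superseded_by: "Memory | None" = None
    history: list = field(default_factory=list)


def jaccard(a, b):
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0


def store(memories, text, entities, threshold=0.5):
    """Add a memory; mark older memories about the same entities as superseded."""
    new = Memory(text, frozenset(entities))
    for old in memories:
        if old.superseded_by is not None:
            continue  # already stale
        same_entities = jaccard(old.entities, new.entities) >= threshold
        similar_text = jaccard(old.text.lower().split(), text.lower().split()) >= threshold
        if same_entities and similar_text and old.text != text:
            old.superseded_by = new                 # supersede instead of duplicating
            new.history = old.history + [old.text]  # keep the revision trail
    memories.append(new)
    return new


def live(memories):
    return [m.text for m in memories if m.superseded_by is None]


memories = []
store(memories, "staging database runs on port 5432", {"staging", "database"})
store(memories, "office wifi password rotates monthly", {"wifi"})
latest = store(memories, "staging database runs on port 6543", {"staging", "database"})
```

After the third call, recall over `live(memories)` sees only the new port, while `latest.history` still holds the old statement.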

Dream Engine Three-Phase Consolidation

Triggered after 600s idle, after 50 new memories, manually through tooling, or as a standalone daemon.
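The two automatic triggers reduce to a small piece of bookkeeping. The class below is a hypothetical sketch: only the 600-second and 50-memory thresholds come from this page, and the `DreamTrigger` name and its methods are invented for illustration.

```python
import time

IDLE_SECONDS = 600     # from the page: trigger after 600s idle
NEW_MEMORY_LIMIT = 50  # from the page: trigger after 50 new memories


class DreamTrigger:
    def __init__(self, now=None):
        self.last_activity = time.monotonic() if now is None else now
        self.new_memories = 0

    def on_store(self, now=None):
        """Record a new memory and reset the idle clock."""
        self.last_activity = time.monotonic() if now is None else now
        self.new_memories += 1

    def should_dream(self, now=None):
        now = time.monotonic() if now is None else now
        idle = now - self.last_activity >= IDLE_SECONDS
        return idle or self.new_memories >= NEW_MEMORY_LIMIT

    def on_dream_finished(self, now=None):
        """Reset both counters so the next cycle needs fresh idle time or memories."""
        self.last_activity = time.monotonic() if now is None else now
        self.new_memories = 0


idle_trigger = DreamTrigger(now=0.0)
busy_trigger = DreamTrigger(now=0.0)
for _ in range(50):
    busy_trigger.on_store(now=1.0)
```

The optional `now` argument exists only so the logic can be exercised without waiting ten minutes.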

Replay 100 recent memories

Run spreading activation, strengthen active edges by +0.05, weaken inactive edges, prune dead edges below 0.05.
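The edge update in this phase can be sketched as follows. The +0.05 boost and the 0.05 prune threshold come from the text above; the size of the weakening step (`decay=0.01`), the cap at 1.0, and the `nrem_pass` name are assumptions.

```python
def nrem_pass(edges, fired, boost=0.05, decay=0.01, prune_below=0.05):
    """edges: {(src, dst): weight}; fired: set of edges active during replay."""
    updated = {}
    for edge, weight in edges.items():
        if edge in fired:
            weight = min(1.0, weight + boost)  # strengthen edges that fired
        else:
            weight = weight - decay            # weaken edges that stayed silent
        if weight >= prune_below:              # drop dead edges
            updated[edge] = weight
    return updated


edges = {("a", "b"): 0.50, ("b", "c"): 0.055, ("c", "d"): 0.30}
after = nrem_pass(edges, fired={("a", "b")})
```

In this example the replayed edge climbs to 0.55, the silent strong edge drifts down slightly, and the silent weak edge falls under the threshold and is pruned.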

Bridge isolated memories

Find 50 isolated memories, search similar unconnected nodes, create bridge connections at similarity x 0.3.
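A minimal sketch of the bridging step, assuming plain cosine similarity over stored vectors. The similarity x 0.3 weighting is from the text above; the `min_sim` cut-off, the `rem_bridges` name, and the choice of one bridge per isolated node are assumptions.

```python
def rem_bridges(vectors, edges, isolated, min_sim=0.6, scale=0.3):
    """Connect each isolated node to its most similar node it is not yet linked to."""
    def cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        na = sum(x * x for x in a) ** 0.5
        nb = sum(x * x for x in b) ** 0.5
        return dot / (na * nb) if na and nb else 0.0

    bridges = {}
    for node in isolated:
        candidates = [
            (cosine(vectors[node], vectors[other]), other)
            for other in vectors
            if other != node
            and (node, other) not in edges
            and (other, node) not in edges
        ]
        if not candidates:
            continue
        sim, best = max(candidates)
        if sim >= min_sim:
            bridges[(node, best)] = sim * scale  # bridge weight = similarity x 0.3
    return bridges


vectors = {"x": [1.0, 0.0], "y": [0.8, 0.6], "z": [0.0, 1.0]}
bridges = rem_bridges(vectors, edges={("y", "z"): 0.4}, isolated=["x"])
```

Scaling the weight down keeps a dreamed bridge weaker than an edge discovered at insert time, so it has to earn strength in later replay passes.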

Store communities

Detect connected components, identify bridge nodes, materialize dream insights and derived cluster memory.
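Component detection is standard graph traversal. The sketch below finds connected components and materialises one record per cluster; bridge-node identification is omitted, the concatenated `summary` is a placeholder for whatever summarisation the engine actually performs, and the function names and `min_size` parameter are assumptions.

```python
from collections import defaultdict


def connected_components(edges):
    """Return sorted member lists for each connected component of an undirected graph."""
    adjacency = defaultdict(set)
    for src, dst in edges:
        adjacency[src].add(dst)
        adjacency[dst].add(src)
    seen, components = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        stack, members = [start], set()
        while stack:
            node = stack.pop()
            if node in members:
                continue
            members.add(node)
            stack.extend(adjacency[node] - members)
        seen |= members
        components.append(sorted(members))
    return components


def insight_memories(edges, texts, min_size=3):
    """Materialise one summary record per sufficiently large component."""
    return [
        {"kind": "dream_insight", "members": c, "summary": " | ".join(texts[m] for m in c)}
        for c in connected_components(edges)
        if len(c) >= min_size
    ]


edges = [("a", "b"), ("b", "c"), ("d", "e")]
texts = {"a": "uses Podman", "b": "rootless pod", "c": "Quadlet units", "d": "likes teal", "e": "teal theme"}
insights = insight_memories(edges, texts)
```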

Benchmark-Driven Defaults

Semantic + entity + PPR. Drops BM25, temporal, and salience because real-prose ablation showed they were dead weight or actively harmful.

Rank-calibrated [0,1] floor replaces the old raw score floor that lived around 0..0.05 and could silently nuke recall.

Personalized PageRank is the load-bearing ranking channel; semantic is the load-bearing recall channel.
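To see why a floor on raw fused scores can wipe out recall, consider one possible reading of "rank-calibrated": map each result to [0, 1] by its rank before applying the floor. The functions and the 0.3 floor below are illustrative assumptions, not Mazemaker's actual calibration.

```python
def rank_calibrate(scored):
    """Map raw fused scores to [0, 1] by rank: best item -> 1.0, worst -> 0.0."""
    ordered = sorted(scored, key=lambda item: item[1], reverse=True)
    n = len(ordered)
    if n == 1:
        return [(ordered[0][0], 1.0)]
    return [(mem_id, 1.0 - i / (n - 1)) for i, (mem_id, _) in enumerate(ordered)]


def apply_floor(calibrated, floor):
    return [mem_id for mem_id, score in calibrated if score >= floor]


raw = [("m1", 0.048), ("m2", 0.032), ("m3", 0.016)]  # raw scores living around 0..0.05
naive = [m for m, s in raw if s >= 0.3]              # floor on raw scores
kept = apply_floor(rank_calibrate(raw), floor=0.3)   # floor on calibrated scores
```

With raw scores this small, the naive floor returns nothing at all, while the calibrated floor keeps the top two results.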

LLM-Callable Surface

Mazemaker exposes six MCP tools. The Hermes provider surfaces the core four schemas; dream controls are available on the Memory class and the daemon path.

Store with conflict detection

Persist facts, decisions, code notes, labels, and metadata. Auto-embed and auto-connect into the graph.

Core MCP

Semantic search

Search memories by meaning and fuse optional retrieval channels through Reciprocal Rank Fusion.

Core MCP

Activation traversal

Start from one memory and traverse graph neighborhoods with BFS or PPR ranking.

Core MCP

Graph statistics

Inspect memory count, graph density, connection topology, and strongest associations.

Core MCP

Force consolidation

Run all phases or target NREM, REM, or Insight manually when using the full Memory class surface.

Memory class

Dream telemetry

Inspect sessions, phase outcomes, insights, strengthened edges, bridges, and pruning output.

Memory class

Embedding Backends Auto-Priority

The Backend Stack

One rootless Podman pod, four containers, shared netns. The backend at v2.mazemaker.dev issues a 24-hour JWT and a per-tenant license, and never sees a single byte of memory content. Everything else runs on your machine, on your disk, in your kernel.

+------------------------------------------------------------------+
| rootless podman pod                                 your machine |
+------------------------------------------------------------------+
| wonderland        AES-256-GCM vault                              |
|                   vault-key = HKDF(JWT, hw-fingerprint)          |
|                   derived at runtime, never on disk              |
+------------------------------------------------------------------+
| mazemaker-mcp     SQLite WAL + weighted graph + dream engine     |
|                   six retrieval modes   MCP / SSE                |
|                   no outbound network during recall              |
+------------------------------------------------------------------+
| embedding-worker  FastEmbed -> ST -> TF-IDF -> hash (1024d)      |
|                   optional OpenRouter proxy for free tier        |
+------------------------------------------------------------------+
| license-client    Ed25519 verify   24h JWT   7d grace            |
|                   6h heartbeat with anonymous counts only        |
+------------------------------------------------------------------+
                                  |
                                  v  heartbeat + usage meter only
             +----------------------------------------+
             | v2.mazemaker.dev                       |
             | issues JWT   counts tool calls         |
             | never sees a byte of memory content    |
             +----------------------------------------+

wonderland

AES-256-GCM encrypted vault. The vault key is derived at runtime via HKDF-SHA256(JWT, hardware-fingerprint) — it lives in memory only, never written to disk, and rotates every JWT refresh.

JWT + fingerprint -> HKDF -> AES-256 key -> GCM seal/open
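The derivation step can be illustrated with HKDF as specified in RFC 5869, built from the standard library's HMAC-SHA256. How wonderland actually maps the JWT and fingerprint onto HKDF's inputs is not stated here, so the mapping below (JWT as keying material, fingerprint as salt, a fixed `info` label) and the `derive_vault_key` name are assumptions.

```python
import hashlib
import hmac


def hkdf_sha256(ikm, salt, info, length=32):
    """HKDF (RFC 5869) with HMAC-SHA256: extract a PRK, then expand to `length` bytes."""
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_vault_key(jwt, hw_fingerprint):
    # Assumed mapping: JWT as input keying material, fingerprint as salt.
    return hkdf_sha256(jwt.encode(), hw_fingerprint.encode(), b"wonderland-vault", 32)


key_today = derive_vault_key("jwt-issued-today", "machine-fingerprint")
key_tomorrow = derive_vault_key("jwt-issued-tomorrow", "machine-fingerprint")
```

The 32-byte output is the right size for an AES-256 key, and a refreshed JWT yields a different key, which is consistent with the rotation described above. Sealing and opening with AES-256-GCM would need a cryptography library on top of this and is not shown.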

mazemaker-mcp

The full retrieval engine: SQLite WAL, weighted graph, dream consolidation, six retrieval modes. Speaks MCP over SSE on the pod-internal loopback. No outbound network calls during recall.

localhost:7791 MCP/SSE · ./data/ SQLite WAL

embedding-worker

FastEmbed ONNX as default, sentence-transformers and TF-IDF as fallbacks, plus an optional OpenRouter proxy for the free tier so users without a GPU still get hosted-quality vectors.

CPU FastEmbed -> ST GPU -> TF-IDF -> hash 1024d

license-client

Verifies the Ed25519-signed JWT, posts a 6-hour heartbeat with anonymous usage counts, and grants a 7-day offline grace period. The fingerprint binds the license to the machine without any account credential round-trip.

Ed25519 verify -> 24h JWT · 7d grace · 6h heartbeat
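The timing rules combine as sketched below. Signature verification is left out, and the sketch assumes the 7-day grace period starts when the 24-hour token expires; that reading, and the function names, are assumptions.

```python
from datetime import datetime, timedelta, timezone

JWT_LIFETIME = timedelta(hours=24)
OFFLINE_GRACE = timedelta(days=7)
HEARTBEAT_EVERY = timedelta(hours=6)


def license_state(issued_at, now):
    """Classify a token by age: valid, in offline grace, or expired."""
    age = now - issued_at
    if age < JWT_LIFETIME:
        return "valid"
    if age < JWT_LIFETIME + OFFLINE_GRACE:
        return "grace"
    return "expired"


def heartbeat_due(last_heartbeat, now):
    return now - last_heartbeat >= HEARTBEAT_EVERY


issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
states = [
    license_state(issued, issued + timedelta(hours=23)),
    license_state(issued, issued + timedelta(days=3)),
    license_state(issued, issued + timedelta(days=9)),
]
```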

The backend ledgers that a tool was called, never what was stored. Memory content stays inside the pod boundary, encrypted at rest by wonderland.

Pure user-namespace Podman with Quadlet .pod and .container units. No Docker daemon, no root, no VM, no SSH — just systemctl --user.

The same pod ships to AWS, GCP, Hetzner, or your laptop. Only the Cloudflare tunnel container changes when you swap providers — the four memory containers do not.

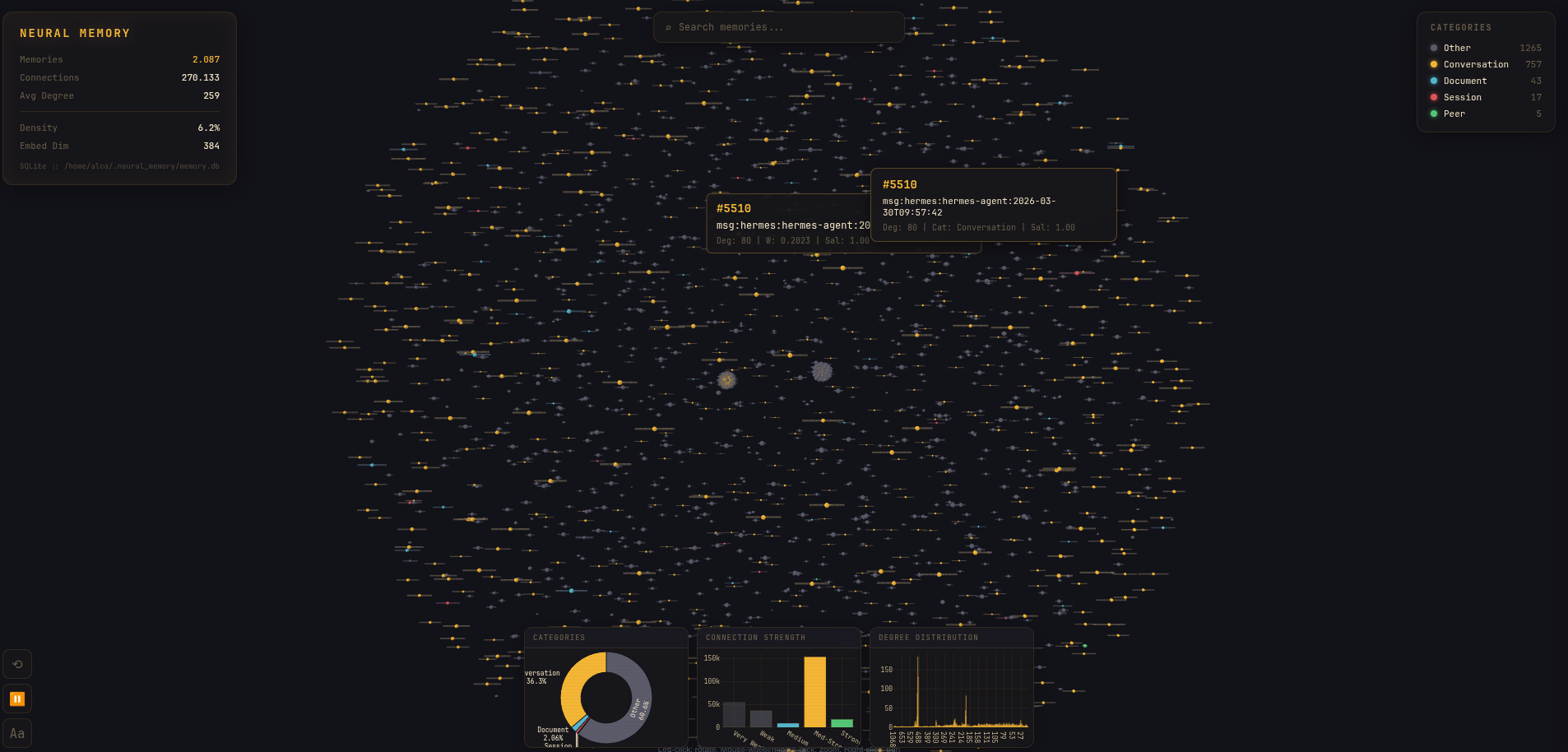

Knowledge Graph Observatory

The adapter ships with the mental model made visible: memories, connections, density, categories, degree distribution, and graph shape.

Simple Pricing

Local FastEmbed runs on your CPU at zero cost. Bring your own embedding key on paid tiers — we never mark up provider compute. We charge per tool call or a flat license, never both.

SQLite WAL

Local FastEmbed CPU or managed Cloudflare AI pool. Email + phone + hCaptcha + fingerprint gate; one machine.

- 50 tool calls / day

- 500 memories

- FastEmbed CPU (offline)

- Managed CF AI pool

- Local SQLite WAL

SQLite WAL

Stripe Metered. Unlimited calls and memories, BYOK embeddings to any supported provider. No monthly minimum.

- Unlimited tool calls

- Unlimited memories

- BYOK Jina / OpenAI / Voyage

- BYOK Together / CF / FastEmbed

- Stripe Metered billing

Postgres + pgvector

Flat-rate predictability for daily heavy use or single-machine teams. BYOK any supported provider.

- 5,000 recalls / day

- 50,000 memories

- Priority license refresh

- Dashboard + usage history

- Dream consolidation nightly

Postgres + pgvector

Custom quotas, optional self-hosted license server, BYOK-HSM, SLA, dedicated support, audit log export.

- Custom memory + call quotas

- Self-hosted license server

- BYOK + BYOK-HSM

- SLA + dedicated support

- Audit log export

All tiers run the same Mazemaker engine. The difference is quota, support depth, and whether you can self-host the license server (Enterprise only). BYOK embeddings stay on your machine; we don’t see your provider keys.

Quickstart Three Steps

Sign up, paste one curl-bash, point your agent at localhost. The whole local-pod stack — wonderland AES-256 vault, mazemaker-mcp, embedding-worker, license-client — lands as rootless Podman Quadlet units in under a minute.

Open the onboarding wizard: email verify, Cloudflare Turnstile, optional passkey enrolment, fingerprint challenge. You walk out with an mzm-* API key bound to the device you ran it on, plus a one-shot install command pre-filled with that key.

https://mazemaker.dev/onboard/

The dashboard hands you a one-shot snippet pre-filled with your key. It lays down the four Quadlet units, generates the device keypair, and pulls the images. No Docker, no sudo, no VM.

curl -fsSL https://mazemaker.dev/install.sh | \
  bash -s -- \
    --remote https://mazemaker.dev \
    --key mzm-your-key

# wonderland        up at 127.0.0.1:8765/sse
# mazemaker-mcp     pod-internal
# license-client    heartbeat 6h
# embedding-worker  FastEmbed → ST → TF-IDF

Your agent talks to wonderland over loopback — content is AES-256-GCM-sealed before it ever crosses a network boundary. The backend never sees a byte.

{
  "mcpServers": {
    "mazemaker": {
      "type": "sse",
      "url": "http://localhost:8765/sse"
    }
  }
}

Don’t want to install anything?

Point the same MCP client at the hosted endpoint. Same tool surface, your data lives on our side. Bring your own embedding key, or use the managed FastEmbed CPU lane on the free tier.

{
  "mcpServers": {
    "mazemaker": {
      "type": "sse",
      "url": "https://v2.mazemaker.dev/sse",
      "headers": { "Authorization": "Bearer mzm-your-key" }
    }
  }
}

Build the maze.

Your agent finds the way.

Persistent semantic memory, graph reasoning, dream consolidation, and audited benchmark lift for agents that need continuity.